Published on December 10, 2024

The Prisoner's Dilemma is a classic problem in game theory. It shows how individual decisions, made in pursuit of self-interest, can lead to outcomes that are harmful for all parties involved. It has psychological, philosophical, and technological implications, which is why this project looks at the dilemma from multiple angles.

The dilemma was originally formulated by Merrill Flood and Melvin Dresher at the RAND Corporation during the Cold War era, and Albert W. Tucker later gave it the standard narrative form that is still used today.

Narrative Setup

Two criminals are arrested and placed in separate cells, unable to communicate with each other. Each prisoner has two options: betray the other prisoner or cooperate by staying silent. The sentence each one receives depends on the combination of both choices.

| Prisoner (2) ↓ / Prisoner (1) → | Betrayal | Cooperation |
|---|---|---|
| Betrayal | Medium sentence for both | (1) long sentence, (2) goes free |
| Cooperation | (2) long sentence, (1) goes free | Light sentence for both |

To make the scenario easier to simulate, the punishment model can be converted into numeric rewards and extended over multiple iterations.

In the adapted version, a banker with a chest of gold coins invites two people to play a game. There is still no communication between them, but the available choices become cooperate and do not cooperate. The winner is determined by the number of coins received.

| Player (2) ↓ / Player (1) → | Do not cooperate | Cooperate |
|---|---|---|
| Do not cooperate | One coin each | (2) gets 5 coins, (1) gets 0 |
| Cooperate | (1) gets 5 coins, (2) gets 0 | 3 coins each |
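The coin table maps each pair of moves to a pair of payoffs, so a dictionary is a natural representation. This is a minimal sketch assuming the standard Axelrod values (3 each for mutual cooperation, 1 each for mutual defection, and 5 versus 0 when only one player cooperates); `PAYOFFS` and `play_round` are illustrative names, not taken from the project's repository.

```python
COOPERATE, DEFECT = "C", "D"

# (first player's move, second player's move) -> (first player's coins, second player's coins).
# Assumed standard Axelrod payoffs: the lone cooperator gets 0.
PAYOFFS = {
    (COOPERATE, COOPERATE): (3, 3),
    (COOPERATE, DEFECT): (0, 5),
    (DEFECT, COOPERATE): (5, 0),
    (DEFECT, DEFECT): (1, 1),
}


def play_round(move_1: str, move_2: str) -> tuple[int, int]:
    """Return the coins each player receives for a single round."""
    return PAYOFFS[(move_1, move_2)]


print(play_round(COOPERATE, DEFECT))  # (0, 5)
print(play_round(DEFECT, DEFECT))     # (1, 1)
```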

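In a single-round version, betrayal is the best option for each player because it pays strictly more no matter what the other player does: 5 coins instead of 3 against a cooperator, and 1 coin instead of 0 against a non-cooperator. When both players recognize that betrayal dominates and act on it, the game settles into what is known as a Nash equilibrium: neither player can improve their payoff by changing strategy unilaterally. The dilemma is that this equilibrium leaves both players worse off (1 coin each) than mutual cooperation would (3 coins each).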
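The dominance argument and the equilibrium can be checked mechanically. The sketch below reuses the same assumed payoffs, confirms that not cooperating pays more than cooperating against either move of the opponent, and searches all four move pairs for pure-strategy Nash equilibria, meaning pairs where neither player gains by switching alone. The function names are hypothetical.

```python
from itertools import product

MOVES = ("C", "D")

# (player 1 move, player 2 move) -> (coins for player 1, coins for player 2).
# Assumes the standard Axelrod payoffs of the coin version.
PAYOFFS = {
    ("C", "C"): (3, 3),
    ("C", "D"): (0, 5),
    ("D", "C"): (5, 0),
    ("D", "D"): (1, 1),
}


def defection_strictly_dominates() -> bool:
    """True if D pays player 1 strictly more than C against every opponent move."""
    return all(PAYOFFS[("D", other)][0] > PAYOFFS[("C", other)][0] for other in MOVES)


def pure_nash_equilibria() -> list[tuple[str, str]]:
    """Return the move pairs where neither player gains by deviating alone."""
    equilibria = []
    for m1, m2 in product(MOVES, MOVES):
        p1, p2 = PAYOFFS[(m1, m2)]
        # Player 1 cannot do better by switching while player 2 keeps m2.
        p1_stays = all(PAYOFFS[(alt, m2)][0] <= p1 for alt in MOVES)
        # Player 2 cannot do better by switching while player 1 keeps m1.
        p2_stays = all(PAYOFFS[(m1, alt)][1] <= p2 for alt in MOVES)
        if p1_stays and p2_stays:
            equilibria.append((m1, m2))
    return equilibria


print(defection_strictly_dominates())  # True
print(pure_nash_equilibria())          # [('D', 'D')]
```

The only equilibrium is mutual defection, even though `PAYOFFS[("C", "C")]` gives both players more.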

However, real life rarely works like a one-shot game. Relationships, workplaces, markets, politics, and even ecosystems often involve repeated interactions. In those settings, the key question becomes: which strategy performs best over time?

Robert Axelrod organized an international tournament in the 1980s to study repeated-game strategies. Participants submitted algorithms that played many rounds of the dilemma. The objective was to study how cooperation emerges and survives in a world where individuals still act in their own interest.

The strategies considered in this project include:

- Tit-for-Tat: Starts by cooperating and then mirrors the opponent's previous move.

- Always Defect: Betrays on every round.

- Always Cooperate: Cooperates regardless of the opponent's action.

- Grudger: Cooperates until betrayed, then defects forever.

- Random: Acts without a fixed pattern.

- Forgiving Tit-for-Tat: Like Tit-for-Tat, but sometimes forgives betrayal.

- Pavlov: Repeats the previous move if the reward was high and changes it if the reward was low.

- Cooperate then Defect: Cooperates on even rounds and defects on odd rounds.

- Win Stay Lose Shift: Keeps the previous move after a good payoff and changes after a poor one.
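Since the project was written with object-oriented programming, each strategy can be a small class behind a shared interface. The classes below are a hypothetical sketch, not the repository's code: every strategy receives its own move history and the opponent's and returns `"C"` or `"D"`. The Random player is assumed to choose 50/50, the `forgiveness` probability of Forgiving Tit-for-Tat is an assumed parameter, and Pavlov uses the textbook win-stay, lose-shift rule (stay after 3 or 5 coins, switch after 0 or 1). The post does not describe how its separate Win Stay Lose Shift strategy differs from Pavlov, so it is not shown.

```python
import random
from abc import ABC, abstractmethod

C, D = "C", "D"  # cooperate / do not cooperate


class Strategy(ABC):
    """Base class: choose the next move from both players' move histories."""

    name = "Strategy"

    @abstractmethod
    def move(self, mine: list[str], theirs: list[str]) -> str:
        """Return C or D given this player's and the opponent's past moves."""


class TitForTat(Strategy):
    name = "Tit-for-Tat"

    def move(self, mine, theirs):
        # Cooperate first, then copy the opponent's previous move.
        return theirs[-1] if theirs else C


class AlwaysDefect(Strategy):
    name = "Always Defect"

    def move(self, mine, theirs):
        return D


class AlwaysCooperate(Strategy):
    name = "Always Cooperate"

    def move(self, mine, theirs):
        return C


class Grudger(Strategy):
    name = "Grudger"

    def move(self, mine, theirs):
        # Once the opponent has defected, never cooperate again.
        return D if D in theirs else C


class RandomPlayer(Strategy):
    name = "Random"

    def __init__(self, rng=None):
        self.rng = rng or random.Random()

    def move(self, mine, theirs):
        # Assumed: cooperate or defect with equal probability.
        return self.rng.choice((C, D))


class ForgivingTitForTat(Strategy):
    name = "Forgiving Tit-for-Tat"

    def __init__(self, forgiveness=0.1, rng=None):
        # Hypothetical parameter: chance of cooperating right after a defection.
        self.forgiveness = forgiveness
        self.rng = rng or random.Random()

    def move(self, mine, theirs):
        if not theirs or theirs[-1] == C:
            return C
        return C if self.rng.random() < self.forgiveness else D


class Pavlov(Strategy):
    name = "Pavlov"

    def move(self, mine, theirs):
        if not mine:
            return C
        # With the assumed payoffs, 3 or 5 coins means the opponent cooperated:
        # stay with the previous move. 0 or 1 coin means they defected: switch.
        if theirs[-1] == C:
            return mine[-1]
        return D if mine[-1] == C else C


class CooperateThenDefect(Strategy):
    name = "Cooperate then Defect"

    def move(self, mine, theirs):
        # The current round index is len(mine): cooperate on even rounds, defect on odd ones.
        return C if len(mine) % 2 == 0 else D
```

Because every strategy only sees the two move lists, the classes are interchangeable inside a tournament loop.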

The project ran a simulation of 1,000 rounds. It was written in Python using object-oriented programming, with Neovim as the editor. The code remains available in my repository for anyone who wants to review or extend it.
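A single 1,000-round match and a round-robin tournament can be sketched as follows. This is not the repository's code: to stay self-contained it uses plain functions for four of the strategies and the standard Axelrod payoffs, and it assumes self-play (each strategy also meets a copy of itself, as in Axelrod's original tournament). `play_match`, `round_robin`, and `STRATEGIES` are hypothetical names.

```python
from itertools import combinations_with_replacement

# Assumed standard payoffs: (move A, move B) -> (coins A, coins B).
PAYOFFS = {
    ("C", "C"): (3, 3),
    ("C", "D"): (0, 5),
    ("D", "C"): (5, 0),
    ("D", "D"): (1, 1),
}


def tit_for_tat(mine, theirs):
    return theirs[-1] if theirs else "C"


def always_defect(mine, theirs):
    return "D"


def always_cooperate(mine, theirs):
    return "C"


def grudger(mine, theirs):
    return "D" if "D" in theirs else "C"


def play_match(strategy_a, strategy_b, rounds=1000):
    """Play an iterated match and return the total coins of each side."""
    hist_a, hist_b = [], []
    score_a = score_b = 0
    for _ in range(rounds):
        move_a = strategy_a(hist_a, hist_b)
        move_b = strategy_b(hist_b, hist_a)
        pay_a, pay_b = PAYOFFS[(move_a, move_b)]
        score_a += pay_a
        score_b += pay_b
        hist_a.append(move_a)
        hist_b.append(move_b)
    return score_a, score_b


def round_robin(strategies, rounds=1000):
    """Pair every strategy with every other and with itself; rank by total coins."""
    totals = dict.fromkeys(strategies, 0)
    for name_a, name_b in combinations_with_replacement(strategies, 2):
        score_a, score_b = play_match(strategies[name_a], strategies[name_b], rounds)
        totals[name_a] += score_a
        if name_b != name_a:  # a self-play match is counted once
            totals[name_b] += score_b
    return sorted(totals.items(), key=lambda item: item[1], reverse=True)


STRATEGIES = {
    "Tit-for-Tat": tit_for_tat,
    "Always Defect": always_defect,
    "Always Cooperate": always_cooperate,
    "Grudger": grudger,
}

for name, coins in round_robin(STRATEGIES):
    print(f"{name}: {coins}")
```

With this reduced pool, Tit-for-Tat and Grudger tie at 9,999 coins, Always Cooperate follows with 9,000, and Always Defect is last with 8,008. Replacing `combinations_with_replacement` with `combinations` (no self-play) puts Always Defect first with 7,008, which shows how strongly any ranking depends on which strategies are in the pool.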

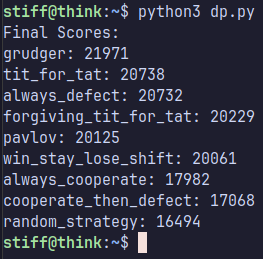

The ranking in this particular run looked roughly like this:

- Grudger

- Tit-for-Tat

- Always Defect

- Forgiving Tit-for-Tat

- Pavlov

- Win Stay Lose Shift

- Always Cooperate

- Cooperate then Defect

- Random

That ranking should not be treated as final, because the simulation only tested a limited set of strategies. Even so, it offers a useful starting point for a broader interpretation, especially when combined with ideas from The Evolution of Cooperation by Robert Axelrod and William Hamilton.

| Strategy | Psychology | Philosophy |
|---|---|---|
| Tit-for-Tat | Encourages trust and reciprocity. | Reflects a balance between utilitarian ethics and Kantian morality. |
| Always Defect | Explores selfishness and the risk of betrayal. | Represents the dilemma of Hobbesian rational egoism. |
| Always Cooperate | Examines altruism and vulnerability. | Models social contract thinking or absolute morality. |
| Grudger (Grim Trigger) | Studies emotional rigidity. | Raises the debate between punishment and forgiveness. |
| Random | Reflects decision-making under uncertainty. | Connects with ideas of free will. |

For Axelrod, the Prisoner's Dilemma is more than a theoretical puzzle; it is a practical tool for understanding the dynamics of cooperation and competition in human systems. Across repeated interactions, long-term success does not come from pure selfishness or pure altruism, but from a flexible balance between the two.

The most successful strategies in this dilemma suggest that:

- Starting with cooperation helps create trust and opens the door to mutual benefit.

- Responding with reciprocity reinforces healthy relationships and discourages disloyal behavior.

- Being adaptable is essential for dealing with change, mistakes, and misunderstandings.

- Forgiveness, when used reasonably, helps repair relationships after conflict, while firmness prevents exploitation.
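The points about mistakes and forgiveness can be illustrated with a single accidental defection. In this sketch two copies of the same strategy play 20 rounds and player A defects once by mistake. Tit-for-Two-Tats, a well-known forgiving variant discussed by Axelrod, stands in for forgiveness here; it is a swapped-in deterministic rule, not necessarily how the project's Forgiving Tit-for-Tat works, and `match_with_one_mistake` and its parameters are hypothetical.

```python
# Assumed standard payoffs: (move A, move B) -> (coins A, coins B).
PAYOFFS = {
    ("C", "C"): (3, 3),
    ("C", "D"): (0, 5),
    ("D", "C"): (5, 0),
    ("D", "D"): (1, 1),
}


def tit_for_tat(mine, theirs):
    # Cooperate first, then copy the opponent's previous move.
    return theirs[-1] if theirs else "C"


def tit_for_two_tats(mine, theirs):
    # Retaliate only after two consecutive defections, so a single slip is forgiven.
    return "D" if theirs[-2:] == ["D", "D"] else "C"


def match_with_one_mistake(strategy, rounds=20, slip_round=5):
    """Two copies of `strategy` play; player A defects by mistake once, at `slip_round`.

    Returns the joint score of both players and each player's move sequence.
    """
    hist_a, hist_b = [], []
    joint = 0
    for r in range(rounds):
        move_a = "D" if r == slip_round else strategy(hist_a, hist_b)
        move_b = strategy(hist_b, hist_a)
        joint += sum(PAYOFFS[(move_a, move_b)])
        hist_a.append(move_a)
        hist_b.append(move_b)
    return joint, "".join(hist_a), "".join(hist_b)


for strategy in (tit_for_tat, tit_for_two_tats):
    joint, moves_a, moves_b = match_with_one_mistake(strategy)
    print(strategy.__name__, joint, moves_a, moves_b)
```

After the slip, the two Tit-for-Tat players fall into an endless alternation of retaliation and earn 105 coins together over 20 rounds. The Tit-for-Two-Tats pair absorbs the mistake and earns 119, close to the 120 of uninterrupted cooperation.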

These ideas go far beyond theory. They apply to everyday life, from personal relationships to politics, economics, and ecology. In a world where individual interests often collide with collective ones, the Prisoner's Dilemma reminds us how valuable it is to pursue cooperation without losing sight of our own goals.

In the end, this problem teaches that there is no single rigid solution. Real success comes from learning, adapting, and evolving in response to the environment and the decisions of others. That is what makes this dilemma such a powerful model for understanding both human behavior and strategic systems.